For the last few projects at thePlatform (a truly great place in almost every respect), we’ve been using the basic version of ScrumWorks. For the projects before that, we used the sticky-note-on-whiteboard approach (not without its own set of problems, of course) and the customized Excel spreadsheet approach (again, with problems).

Usually, for sprint planning, one of the product owners/solution architects will fill in the uncommitted backlog ahead of time, and we just drag things around and refine estimates during the meeting.

Today, with our regular product owner gone on vacation, I put the majority of things in ScrumWorks, and it was painful.

The most frequent problem was that I had to estimate both stories and tasks before I could put them in the sprint. It totally breaks the creative flow of “oh, we’re going to need to do this, put that in there” when I get these (and there’s no other word for it) rude dialog boxes popping up, telling me that I can’t do what I just tried to do because I have to do something else first.

On top of that, there’s this rigid two-level hierarchy in place. If I accidentally enter something that should be a task as a story or vice versa, too bad: I’ve got to re-enter it and re-estimate it (in different units of measurement, no less) to appease the software.

At that point, I’m working to serve the needs of the system, and not the other way around.

The biggest revelation I had today (which I shouldn’t complain about too much, as I only spent around 30 minutes on it) was that this isn’t the way I would model a software development project.

Besides the rude UI around entering stories/tasks, there’s no logic for collective ownership or load-leveling (as in, “Gee… it looks like those are all things that Kevin’s going to do and Mo has nothing”). I also prefer to estimate both stories and tasks as small/medium/large rather than in days/hours (sometimes with a translation table that says that small = 8 hours, on average, etc.).
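To make that concrete, here is a minimal sketch of the kind of load-leveling check I have in mind, paired with a size-to-hours translation table. Only “small = 8 hours” comes from my own rule of thumb above; the medium and large values, the function names, and the 16-hour tolerance are assumptions made up for illustration, and none of this reflects how ScrumWorks or any other tool actually works.

```python
# Translation table from T-shirt sizes to average hours.
# Only "small = 8" is from the post; medium and large are assumed values.
SIZE_TO_HOURS = {"small": 8, "medium": 16, "large": 40}


def load_by_person(tasks, team):
    """Sum estimated hours per team member, including people with no tasks."""
    load = {person: 0 for person in team}
    for owner, size in tasks:
        load[owner] = load.get(owner, 0) + SIZE_TO_HOURS[size]
    return load


def is_unbalanced(load, tolerance_hours=16):
    """Flag the sprint if the busiest and idlest people differ by too much.

    The tolerance is a hypothetical threshold, not a recommended value.
    """
    return max(load.values()) - min(load.values()) > tolerance_hours


# Everything is assigned to Kevin and Mo has nothing.
tasks = [("Kevin", "small"), ("Kevin", "medium"), ("Kevin", "large")]
load = load_by_person(tasks, team=["Kevin", "Mo"])

print(load)
print(is_unbalanced(load))
```

The point is not the arithmetic, which is trivial, but that a tool holding this data could surface the imbalance on its own instead of leaving it for someone to notice in the meeting.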

I’ve been wanting to write my own software project management tool for years, the design of which changes as I learn more about how different teams approach software tools. I’m eager to try Mingle, from ThoughtWorks, as I trust that they know how to develop a less offensive user interface.

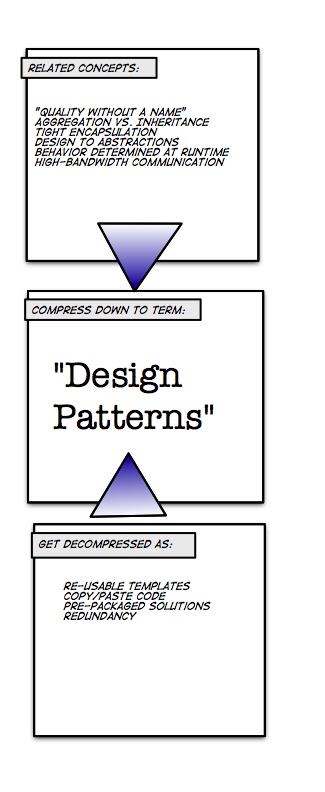

My hope is that the people developing/using these tools realize that they are essentially modeling tools. The “state of the world” in ScrumWorks is an incomplete model of the actual state of the project. Like any model, it can only approach reality, and after a particular point, you get diminishing returns with marginal verisimilitude. Also, just like I’m starting to see the Scrum process as too prescriptive (that is, “what” driven instead of “why” driven), ScrumWorks is way too prescriptive. I don’t want people telling me how to make software, and I sure as hell don’t want software to tell me how to make software.

Even though it means that I’ll have to give up a whiteboard, I might just start lobbying for the sticky notes again.